I've been working on making TESSERA map embeddings even easier to retrieve, so that we can build dynamic user interfaces in the browser or on mobile phones.

When we first released GeoTessera last year, every 0.1° tile was a pair of numpy files: one quantized embedding and one scale array. That worked fine for grabbing a few tiles at a time, but our push for global coverage from 2017-2025 is producing around 1.8 million tiles per year, each weighing in at around 150MB! Serving these over HTTP means a million small directories on disk, and every client that wants a contiguous region needs to discover, fetch and stitch dozens of them, with a minimum download of 150MB.

To fix this, we need to rethink the structure of the storage entirely: it's quite tricky to support both a small download (e.g. from a mobile phone) and also a large region from a cloud provider. Luckily, there's a new cloud-native streaming format in town that's just the ticket, known as Zarr. Since the GeoTessera 0.7 release where we first added basic Zarr support, I've been working on consolidating all our tiles into a single sharded Zarr v3 store per year.

This post explains the TESSERA Zarr conventions proposal and why the chunking size choices matter. I'd also love to get feedback from experienced geospatial gurus, so this post is also an RFC of sorts.

1 Why Zarr v3?

Zarr is a format for large N-dimensional typed arrays designed for cloud object stores. It's great because it allows multidimensional arrays to be accessed via HTTP, meaning that normal S3 or HTTP static servers are sufficient for hosting large datasets.

I built a first prototype a few weeks ago using Zarr v2, and mapped the existing npy tile format we use to it. This collects up batches of 10m² pixel embeddings into larger tiles, which can be downloaded as a unit (of around 150MB each). The v3 specification (released last year) brings a couple of important new features to improve this:

- Sharding: a single physical file can contain many logical chunks, indexed by an inline index. This means a client can issue one HTTP range request to get the shard index, then a second byte range to get exactly the chunk it needs. Without sharding, every logical chunk would be a separate file, and so reducing our minimum pixel size to save on downloads for small ROIs (e.g. for mobile devices) would be impractical and involve 100s of millions of tiny files.

- Codecs: these formalise the chain of compression, transposition and serialisation applied to each chunk. Sharding is one such codec, and we also use Blosc/Zstd for all arrays, which gives us reasonable compression ratios on the int8 embeddings. We're never going to get amazing compression ratios on the TESSERA embeddings because they are high entropy (we reduce 1000s of dimensions into 128 during the training and inference process), but there's still some win to be had.
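
To make the sharding idea concrete, here's a minimal sketch in plain Python of a shard file with its index at the end. It uses the same (offset, nbytes) pairs of little-endian uint64 that the Zarr v3 sharding codec stores per chunk, but it is a simplification: it leaves out the checksum that the real index usually carries and any per-chunk compression, and `pack_shard` and `read_chunk` are made-up names for illustration only.

```python
import struct

# One index entry per chunk: (offset, nbytes), both little-endian uint64.
ENTRY = struct.Struct("<QQ")


def pack_shard(chunks):
    """Concatenate chunk payloads and append the index at the end."""
    body = bytearray()
    index = bytearray()
    for payload in chunks:
        index += ENTRY.pack(len(body), len(payload))
        body += payload
    return bytes(body + index)


def read_chunk(shard, n_chunks, i):
    """Fetch chunk i by consulting only the trailing index."""
    index_start = len(shard) - n_chunks * ENTRY.size
    offset, nbytes = ENTRY.unpack_from(shard, index_start + i * ENTRY.size)
    return shard[offset:offset + nbytes]


chunks = [b"alpha", b"bravo!", b"c"]
shard = pack_shard(chunks)
second = read_chunk(shard, len(chunks), 1)
```

Over HTTP, the two slices in `read_chunk` become the two range requests: one for the tail of the file, one for the chunk itself.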

The Python zarr library has reasonably solid v3 support now, and in my tests the wider ecosystem such as xarray, dask, rioxarray can all read these v3 stores without issue. So I think we're good to use v3 features now!

2 The store layout

Each embeddings year gets a single Zarr store. Within it, each UTM zone is a group that contains that particular strip of the planet's embeddings and scales. For clients to visualise what's going on, there's an optional global RGB preview group:

    2024.zarr/
      zarr.json          # root: version, year, conventions
      utm29/             # one group per UTM zone
        embeddings       # int8 (H, W, 128)
        scales           # float32 (H, W)
        rgb              # uint8 (H, W, 4) [optional]
        easting          # float64 (W,)
        northing         # float64 (H,)
        band             # int32 (128,)
      utm30/
        ...
      global_rgb/        # EPSG:4326 preview pyramid
        0/rgb            # uint8 (H, W, 4) level 0
        1/rgb            # level 1
        2/rgb            # level 2
        ...

Using one store per year rather than per zone allows us to use an experimental consolidated metadata feature. Once zarr.consolidate_metadata() has been run over the finished store, a client can read the full catalog of zones, their spatial extents, and which arrays exist in a single metadata fetch. This (I think) eliminates the need for the Parquet registry we currently maintain for TESSERA.
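
As a rough illustration of what a client gains from this, the sketch below lists the zones found in a root zarr.json that carries consolidated metadata. The JSON is a hand-written stand-in rather than the real TESSERA metadata: the `consolidated_metadata` layout is my assumption of what zarr-python 3 writes, the shapes and attribute values are invented, and `list_zones` is a hypothetical helper.

```python
import json

# Hand-written stand-in for the root zarr.json of a year store (values invented).
ROOT_ZARR_JSON = """
{
  "zarr_format": 3,
  "node_type": "group",
  "attributes": {"year": 2024},
  "consolidated_metadata": {
    "kind": "inline",
    "must_understand": false,
    "metadata": {
      "utm29": {"zarr_format": 3, "node_type": "group",
                "attributes": {"proj:code": "EPSG:32629"}},
      "utm29/embeddings": {"zarr_format": 3, "node_type": "array",
                           "shape": [1024, 2048, 128]},
      "utm30": {"zarr_format": 3, "node_type": "group",
                "attributes": {"proj:code": "EPSG:32630"}}
    }
  }
}
"""


def list_zones(root):
    """Return {zone name: CRS code} for every top-level group."""
    nodes = root["consolidated_metadata"]["metadata"]
    return {
        path: meta["attributes"].get("proj:code")
        for path, meta in nodes.items()
        if meta["node_type"] == "group" and "/" not in path
    }


zones = list_zones(json.loads(ROOT_ZARR_JSON))
```

The point is that one small JSON document is enough to discover every zone and array, with no directory listing and no separate registry file.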

Like the current npy embeddings, each zone group carries its own CRS to minimise coordinate skew. Each zone has a proj:code attribute (e.g. EPSG:32630) and a spatial:transform giving the affine matrix. Southern hemisphere zones use the canonical northern-hemisphere EPSG code with the 10,000,000m false northing subtracted, so the northing axis is continuous.
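
Here's a small sketch of how a client might use these two pieces of metadata. It assumes the six affine coefficients are ordered (a, b, c, d, e, f) in the same way as rasterio's Affine, and that pixel (0, 0) refers to the top-left corner of the array; both are assumptions to check against the spatial convention. The function names and the example transform are invented for illustration.

```python
import math

FALSE_NORTHING_M = 10_000_000.0  # standard UTM offset for southern zones


def south_to_continuous(northing_south):
    """Convert a southern-zone UTM northing to the northern-zone axis."""
    return northing_south - FALSE_NORTHING_M


def pixel_to_world(transform, row, col):
    """Map a (row, col) pixel corner to (easting, northing)."""
    a, b, c, d, e, f = transform
    return (a * col + b * row + c, d * col + e * row + f)


def world_to_pixel(transform, x, y):
    """Invert the affine and return the (row, col) containing (x, y)."""
    a, b, c, d, e, f = transform
    det = a * e - b * d
    col = (e * (x - c) - b * (y - f)) / det
    row = (a * (y - f) - d * (x - c)) / det
    return int(math.floor(row)), int(math.floor(col))


# Hypothetical north-up grid with 10m pixels.
example_transform = (10.0, 0.0, 500000.0, 0.0, -10.0, 200000.0)
corner = pixel_to_world(example_transform, 3, 2)
pixel = world_to_pixel(example_transform, 500025.0, 199965.0)
```

A southern-hemisphere northing of 9,000,000m becomes -1,000,000m under `south_to_continuous`, which is what lets a zone's northing axis run through the equator without a jump.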

Coordinate arrays (easting, northing, band) are small 1-D arrays stored alongside the data, so an xarray open_zarr just works with labeled axes. These are labeled as dimension names so that xarray or other clients can pick them up automatically.

3 Sharding and chunking

The TESSERA embeddings are quite large in aggregate, and that is where most of the design time went. TESSERA clients have three very different access patterns:

- A single-pixel lookup or a small region-of-interest means that a user has a lon/lat and wants the 128-d embedding vector at that point. This should be ~2KB over HTTP. This might be a mobile device aiming to do active learning, for example.

- A regional subset means that a user wants a spatial rectangle (say, 100km²) of all 128 bands. This should stream efficiently without reading the whole zone and mosaicing it in memory (a source of memory problems currently). This might be a desktop analysis, or even a satellite scanning a region.

- A scan of entire countries to do global analyses, which requires terabytes of downloads to retrieve the full set of embeddings.

We will come back to solve the third 'entire countries' problem later, via a new variant of the model we are training that uses Matryoshka embeddings. The first two pull in opposite directions, though: mobile clients need tiny reads, while chunkier desktop analysis tools want large contiguous blocks. Zarr v3 sharding reconciles the two by letting us pick the shard size and the inner chunk size separately:

- Shard: 256 × 256 pixels (aligned to tile boundaries)

- Chunk: 4 × 4 pixels (inner chunk within each shard)

Each shard is a single file on disk or object in S3, containing a grid of 64×64 inner chunks plus a shard index at the end. With 4096 index entries of 16 bytes each (an offset and a length), that index comes to ~64KB. To read a single pixel the client has to:

- Fetch the shard index via one HTTP range request of ~64KB, which is cacheable.

- Compute which 4×4 inner chunk contains the pixel.

- Fetch that chunk with another HTTP range request that's ~2KB for int8×128, and can reuse the previous HTTP connection via keep-alive.
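
The steps above boil down to a few lines of integer arithmetic, sketched here. This assumes the default Zarr v3 chunk key encoding (`c/<row>/<col>/<band>` relative to the array), index entries of two uint64 values followed by a 4-byte crc32c checksum, and C-order layout inside each chunk; under those assumptions the index works out to 65,540 bytes. `plan_pixel_read` is a hypothetical helper, not part of any library.

```python
SHARD = 256          # shard edge in pixels
CHUNK = 4            # inner chunk edge in pixels
BANDS = 128          # int8 values per pixel
ENTRY_BYTES = 16     # one index entry: uint64 offset + uint64 nbytes
CHECKSUM_BYTES = 4   # crc32c trailer on the index (assumed default)

CHUNKS_PER_EDGE = SHARD // CHUNK
INDEX_BYTES = CHUNKS_PER_EDGE ** 2 * ENTRY_BYTES + CHECKSUM_BYTES
RAW_CHUNK_BYTES = CHUNK * CHUNK * BANDS  # size before compression


def plan_pixel_read(row, col):
    """Work out which object and byte offsets a single-pixel read needs."""
    shard_row, in_row = divmod(row, SHARD)
    shard_col, in_col = divmod(col, SHARD)
    chunk_row, px_row = divmod(in_row, CHUNK)
    chunk_col, px_col = divmod(in_col, CHUNK)
    entry = chunk_row * CHUNKS_PER_EDGE + chunk_col  # C-order slot in the index
    return {
        "shard_key": f"c/{shard_row}/{shard_col}/0",
        "index_range": f"bytes=-{INDEX_BYTES}",      # suffix range: tail of the file
        "entry_offset": entry * ENTRY_BYTES,         # where to look inside the index
        "pixel_offset": (px_row * CHUNK + px_col) * BANDS,  # inside the decoded chunk
    }


plan = plan_pixel_read(1000, 515)
```

The index entry found at `entry_offset` then supplies the offset and length for the second range request, and `pixel_offset` locates the 128 bytes for the pixel once the chunk is decompressed.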

For a single pixel read, there's a bit of extra overhead from the index, but it's tolerable. For a regional read, the client fetches whole shards and gets contiguous 256×256 blocks, which is efficient for downstream processing.
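
For the regional case, working out which shards to fetch is just as simple. The sketch below assumes a half-open pixel window and pixels with a 10m edge, so a 10km × 10km region is 1000 × 1000 pixels; `shards_for_window` is a hypothetical helper.

```python
SHARD = 256  # shard edge in pixels


def shards_for_window(row0, row1, col0, col1):
    """Shard grid coordinates touched by the half-open pixel window."""
    rows = range(row0 // SHARD, (row1 - 1) // SHARD + 1)
    cols = range(col0 // SHARD, (col1 - 1) // SHARD + 1)
    return [(r, c) for r in rows for c in cols]


# A 1000 x 1000 pixel window at an arbitrary, unaligned offset.
touched = shards_for_window(100, 1100, 200, 1200)
```

An unaligned window like this one touches a 5×5 block of shards, while a window aligned to shard boundaries and 1024 pixels on a side needs only 4×4.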

Another neat thing about Zarr is that a single store can hold arrays of different data types. TESSERA uses a quantisation trick to compress the embeddings with a 'scale' array, which is a float32 held alongside the 128-dimension int8 values. This array uses the same 256/4 sharding, and a NaN scale signals that a pixel has no data. This lets us skip the need for the landmask TIFFs we currently maintain.
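
On the client side, undoing the quantisation for one pixel might look like the sketch below. It assumes the float embedding is recovered by multiplying each int8 value by that pixel's scale, and it treats a NaN scale as "no data"; `dequantise_pixel` is a hypothetical name, and a real client would do this with vectorised array operations rather than Python lists.

```python
import math
from typing import List, Optional, Sequence


def dequantise_pixel(values: Sequence[int], scale: float) -> Optional[List[float]]:
    """Recover the float embedding for one pixel, or None if it has no data."""
    if math.isnan(scale):
        return None  # NaN scale marks a pixel with no embedding (e.g. masked out)
    return [v * scale for v in values]


valid = dequantise_pixel([-128, 0, 64], 0.5)
missing = dequantise_pixel([1, 2, 3], float("nan"))
```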

After this the global RGB preview is plain sailing as it's more like a conventional visual map tile. It uses plain 512×512 chunks with no sharding, since the pyramid levels get small quickly and the access pattern is always tile-aligned for map rendering. These previews can also be reprojected by the client for dynamic maps.

4 GeoZarr conventions

The GeoZarr spec is still under active development, and has a conventions mechanism where stores declare which metadata schemas they follow. We use three:

- proj: is the CRS information formerly held in our landmask TIFFs. Each zone group carries a proj:code (e.g. "EPSG:32630") and proj:wkt2 for the full WKT2 string.

- spatial: is the affine spatial coordinate transforms. Each zone group has spatial:transform (the 6-element affine), spatial:dimensions, spatial:shape, spatial:bbox and spatial:registration. This can be calculated fairly easily from the CRS, but included here so that clients that know the spatial Zarr convention can just query this and use it directly.

- multiscales is the pyramid layout for the global preview, compatible with the approach used by topozarr.
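
As an example of how these attributes relate to each other, here's a sketch that derives a bounding box from a transform and a shape. The attribute values are invented, and the sketch assumes spatial:shape is [height, width], the affine is ordered (a, b, c, d, e, f), and the bounding box is [xmin, ymin, xmax, ymax]; the convention itself is the authority on all three.

```python
def bbox_from_transform(transform, shape):
    """Bounding box of an array from its affine transform and (height, width)."""
    a, b, c, d, e, f = transform
    height, width = shape
    corners = [(0, 0), (0, width), (height, 0), (height, width)]  # (row, col)
    xs = [a * col + b * row + c for row, col in corners]
    ys = [d * col + e * row + f for row, col in corners]
    return [min(xs), min(ys), max(xs), max(ys)]


# Hypothetical attributes for one zone group.
zone_attrs = {
    "proj:code": "EPSG:32630",
    "spatial:transform": [10.0, 0.0, 500000.0, 0.0, -10.0, 200000.0],
    "spatial:shape": [512, 1024],
}
bbox = bbox_from_transform(zone_attrs["spatial:transform"], zone_attrs["spatial:shape"])
```

Storing the result as spatial:bbox saves every client from repeating this, which matters most for thin clients that only want to know whether a zone overlaps their region.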

These conventions are registered in the root zarr.json attributes as an array, following the ZEP for conventions. This makes the stores more self-describing as any Zarr-aware tool can read the conventions list and know what metadata keys to expect.

In order to join the Zarr specification party, I've created zarr-convention-tessera to crystallise the conventions I've used in TESSERA, such as the UTM zone splitting and quantisation bands. Once we're happy with this format, existing libraries like geotessera and also the upcoming OCaml geotessera can all switch to Zarr streaming instead.

5 Building the TESSERA Zarr stores

The geotessera-registry CLI now has commands in development for this pipeline. It's unfortunately a very computationally heavy job, since the input npy tiles have to be rearranged and rewritten one by one into the Zarr format, and then the RGB pyramids calculated. This is reasonably easy to parallelise, but we're a little stuck on our university storage cluster due to relatively slow network interconnects at the moment.

Figuring out this conversion bottleneck is top of my list next week; in particular, if you have any leads on a cloud storage provider that may like to sponsor a petabyte or two of S3 storage, I'm all ears!

One other useful thing is that we generate a STAC catalog that provides a standards-compliant discovery layer: one STAC collection per year. This lets us use tile servers and hopefully eventually STAC-D pipelines to integrate these into planetary computing pipelines.

6 What's next

We won't stop serving the npy files for some time, since we have a number of users already committed to those, and that workflow is fine for regional analysis. However, I'm keen to unlock mobile workflows as there's a lot of demand for this (especially after the TESSERA hackathon in Delhi), so we'll push forward with Zarr. In particular, thank you to Deepak Cherian for giving me lots of Zarr advice on our Zulip channel.

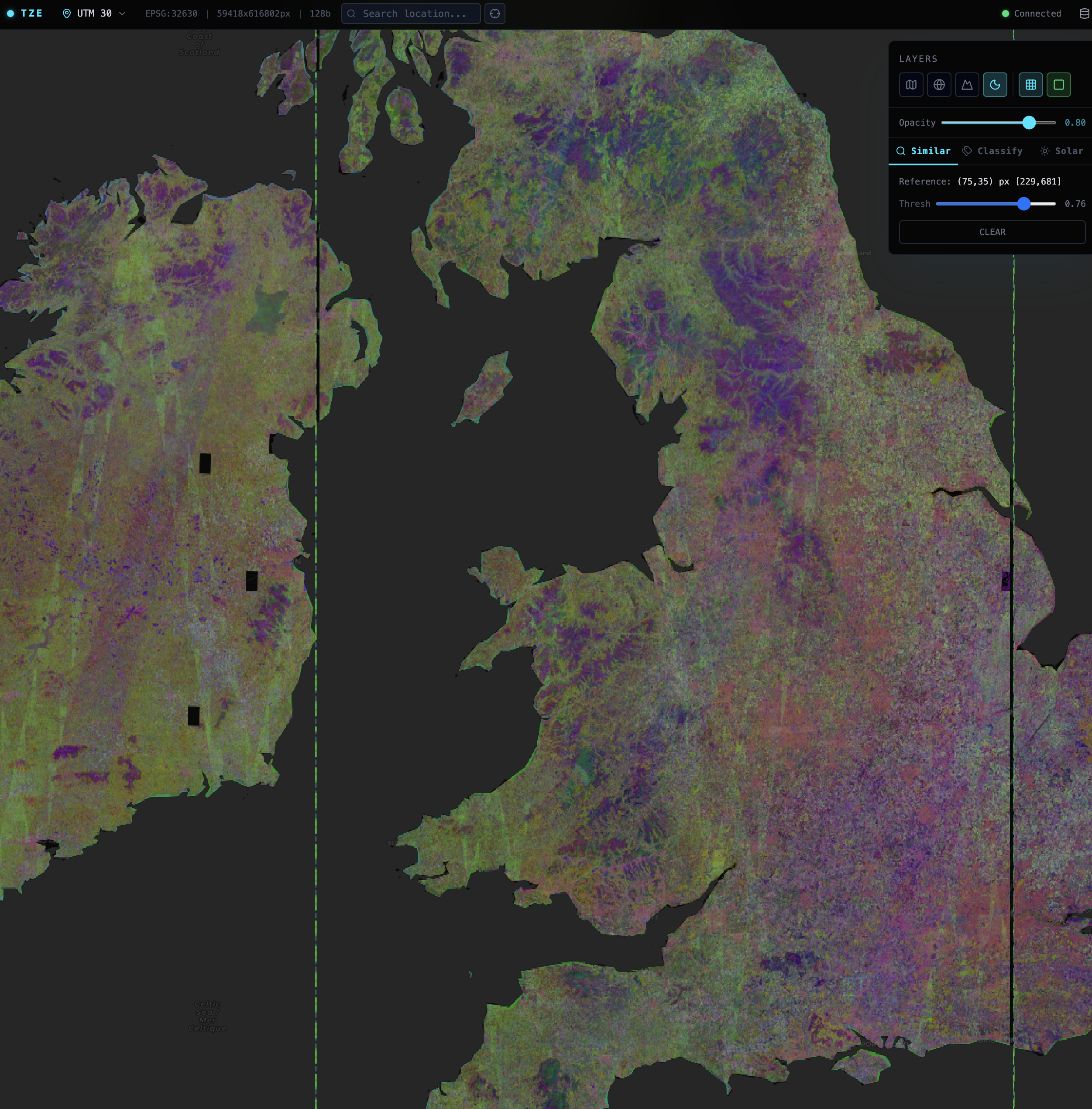

While this spec is out for review, here's a sneak peek of a TESSERA Zarr web viewer that reads directly from the Zarr stores into the browser, with no server required. I'm also working on an access library in OxCaml using Mark Elvers' OCaml Zarr library so that we can use these from our native pipeline too. This would also make it much easier to integrate TESSERA into the biodiversity monitoring standards framework that we've been working on.

I also discovered that there had been a very relevant vector embeddings hackathon held a few days ago at Clark University. They came up with a STAC for embeddings proposal, on which I've left a query as well to make sure our work is compatible.