I've been on leave this week so not much coding, but I did progress some threads from last week which I'll update on!

1 TESSERA+Zarr take 3

After I published the TESSERA Zarr conventions from last week, I got a load of useful feedback from other, more experienced Zarr heads.

Issue #1 convinced me to rearrange our layout fundamentally to include the embedding year as a dimension, and also to increase the chunk size to make the shard/chunk coarser but still reasonably lightweight. The main problem with adding in the year as a dimension is that it's difficult to prepend years without rewriting the store, but the obvious answer is to zero-fill the entire store up front and then selectively replace years as they are generated.
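To make the zero-fill-then-replace idea concrete, here is a toy sketch in plain Python. It is not the Zarr API or the actual registry code: the `YearStore` class, its method names and the 2017 to 2024 year range are all hypothetical, chosen only to show why declaring the whole year axis up front avoids ever having to prepend.

```python
class YearStore:
    """Toy stand-in for a (year, y, x) array with a fixed, pre-declared year axis.

    Hypothetical illustration only; a real store would be a chunked Zarr array.
    """

    def __init__(self, first_year, last_year, height, width, fill=0):
        # The year axis is fixed at creation, so index 0 never has to move.
        self.years = list(range(first_year, last_year + 1))
        self.shape = (len(self.years), height, width)
        self.fill = fill
        self._tiles = {}  # year index -> 2D list; absent means "still zero-filled"

    def year_index(self, year):
        return self.years.index(year)  # raises ValueError outside the declared range

    def write_year(self, year, tile):
        """Replace the zero-filled slab for one year with real data."""
        _, height, width = self.shape
        if len(tile) != height or any(len(row) != width for row in tile):
            raise ValueError("tile shape does not match the store")
        self._tiles[self.year_index(year)] = [list(row) for row in tile]

    def read(self, year, y, x):
        tile = self._tiles.get(self.year_index(year))
        return self.fill if tile is None else tile[y][x]


store = YearStore(2017, 2024, height=2, width=2)
store.write_year(2024, [[1, 2], [3, 4]])  # years can arrive in any order
latest = store.read(2024, 1, 0)    # real data
untouched = store.read(2017, 0, 0) # still the fill value
```

Because every year already has a slot, filling in an earlier year later on is just another `write_year` call rather than a rewrite of the whole store.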

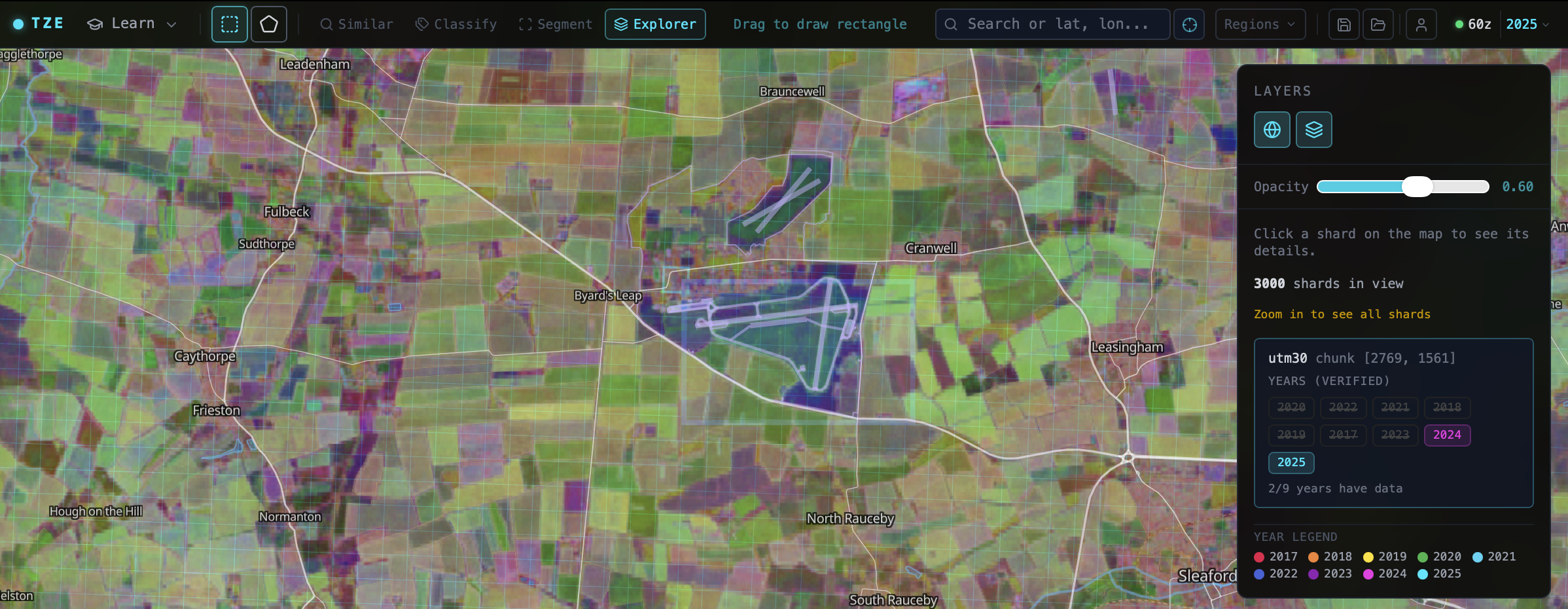

So that's what I've done: I've updated the Zarr store PR with three registry commands to zero-fill a new store, to add npy tiles to a particular year/utm, and then to calculate rgb multiscale pyramids as before from the whole store (but just from the latest year for now, since this is just for visualisation). I also adapted the TZE viewer to support this and it's working nicely at the bigger chunk size: still reasonably lightweight for mobile use, but also much faster to download with fewer HTTP range requests. Thanks to Len Strnad and Jeff Albrecht for their inputs.
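A rough way to see why the bigger chunks cut down on range requests is to count how many chunks a viewport touches. This is a back-of-the-envelope sketch, assuming one HTTP range request per chunk; the viewport and the two chunk sizes are made-up numbers, not the ones used in the real store.

```python
import math


def chunks_touched(window, chunk):
    """Count chunks intersecting a half-open pixel window (y0, y1, x0, x1)."""
    y0, y1, x0, x1 = window
    chunk_h, chunk_w = chunk
    rows = math.ceil(y1 / chunk_h) - y0 // chunk_h
    cols = math.ceil(x1 / chunk_w) - x0 // chunk_w
    return rows * cols


# Hypothetical 1024x1024 viewport that is not aligned to chunk boundaries.
window = (1000, 2024, 3000, 4024)
small = chunks_touched(window, (256, 256))    # many small fetches
large = chunks_touched(window, (1024, 1024))  # a handful of larger fetches
```

The trade-off is that each larger chunk carries more bytes the viewer may not need, which is why the size still has to stay reasonable for mobile use.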

I missed a very relevant hackathon at Clark University held last week about geo embeddings, but they created a great embeddings-stac-specification repository to start to connect STAC with vector embeddings. We had a great discussion about what makes the TESSERA QAT-compressed embeddings different here, resulting in a PR to fix it. I also opened up a fix to geozarr-toolkit for its use of Zarr conventions (the exact string name is important!), and now the third iteration of my Zarr store should hopefully be compliant on the Zarr web inspector!

My next step is to port the geotessera examples to use xarray to stream via Zarr (probably with a thin layer for dequantisation) in the Python library, and then look at the OxCaml implementation so I can get a clean-slate version going (essential for my own understanding of how the whole stack works).
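For a sense of what that thin dequantisation layer might look like, here is a minimal sketch in plain Python. It assumes the embeddings are stored as signed integers with one floating-point scale per pixel, which may not match the real TESSERA scheme exactly; the function names are hypothetical, and a real version would be vectorised over arrays rather than looping over lists.

```python
def dequantise(values, scale):
    """Map one pixel's quantised integer vector back to floats."""
    return [v * scale for v in values]


def dequantise_pixels(quantised, scales):
    """Dequantise a list of per-pixel integer vectors.

    quantised: one list of ints per pixel (assumed storage format)
    scales:    one float scale factor per pixel (assumed storage format)
    """
    if len(quantised) != len(scales):
        raise ValueError("need exactly one scale per pixel")
    return [dequantise(vec, s) for vec, s in zip(quantised, scales)]


pixels = dequantise_pixels([[10, -20], [4, 0]], [0.5, 0.25])
```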

The https://geotessera.org site launched last week seems to have gone down very well, but I'm now tarpitting on figuring out how to automatically post to LinkedIn pages. I tried IFTTT quickly which only supports personal accounts, and then Buffer which is super complicated, so now I'm reverse engineering the app API. How can posting to the socials from an Atom feed be this complex??

2 Springer letting us download papers, but not really

Over in the evidence TAP world, we're continuing to make the paper downloader work well. Springer has announced a premium API that costs big bucks, but Cambridge has decided to pay for it so we can continue downloading. Unfortunately, this doesn't give access to fulltext PDFs, only an XML version that requires a lot of work to get the figures and reconstruct the original author intent. Apparently they can't offer the PDFs because of the technical impossibility of serving so many of them.

Wiley has also launched an AI gateway for their works:

Wiley AI Gateway platform, infrastructure that connects AI agents directly to publisher-authorized scholarly content. Think of it as creating a bridge between your researchers' AI tools and vetted academic sources. What it does:

- Connects AI agents (Claude, Mistral, and others) to 3 million Wiley articles optimized for AI retrieval, with plans to expand to diverse scholarly content from multiple publishers and sources

- Provides institutional visibility and governance over AI content usage

- Ensures publisher-authorized, copyright-compliant access

- Works immediately with MCP-compatible AI tools

Still not entirely relevant for us, since we just want the full text in order to do our own reproducible and human-in-the-loop annotations, so we'll have to see if all these new products make it even more hostile for us to just get the original science that we need.

We also took part in a few events on evidence synthesis, including the Department of Energy Security & Net Zero, and Sam Reynolds led a robust discussion at a panel hosted by Nature. We're going to continue to see very rapid movement in the coming months in the world of evidence synthesis. Alec Christie pointed out the Evals Consensus consortium as well; it's nice to see collaborative groups forming all over the world.

3 OCaml development

After Patrick Ferris wrote up his thoughtful vibe coding etiquette a few weeks ago, I've been introspecting my own mood when it comes to open source maintenance. I definitely got grumpy when I read this title on the Discuss forums, so I need to discover my more constructive side again. Thomas Gazagnaire tells me that he's been having great success with a monopam fork (with a rewritten git layer) that makes it work much more efficiently on large git subtrees, so I'm going to try this out to see if it makes balancing (and separating) AI content from human-written code easier. I need to write some performance-sensitive code for Zarr, which still needs to be done by hand!

I really enjoyed Andrew Nesbitt's post on git remote helpers, which has sparked off all sorts of subversive thoughts about defining custom git endpoints using Irmin or Tangled. This seems ripe for a bit of ATProto magic to resolve DIDs and perhaps even hide the PDS handle (so you can just git clone at:anil.recoil.org/monopam rather than having to know that my own PDS URL is git.recoil.org).

4 Fun links

- I read this paper on predicting biodiversity using forest structure complexity and greatly liked this way of predicting alpha animal diversity.

- I failed hard on the PL Slop or not test.

- I enjoyed the bling WebGL shaders gallery.

- I resisted getting distracted by lidar visualisations after seeing Michael Dales hacking!

- I digested pcodec a bit, which losslessly compresses and decompresses numerical sequences.