Following the US, China, France and India meetings, my policy bingo card continues with a Royal Society meeting on AI in Science with the European Commission. I discovered that the EU Delegation to the UK has an office in London that is much reduced in size since Brexit but, encouragingly, is still an anchor for opening EU-wide policy conversations with us.

The session was co-chaired by Professor Alison Noble, Foreign Secretary of the Royal Society (who I went to China with back in 2023) and Maria Cristina Russo, the Deputy Director-General for Innovation, Prosperity and International Cooperation at the European Commission.

1 Framing from the chairs

The meeting was held under the Chatham House Rule, so these are my overall notes, paraphrased without attribution aside from the chairs' opening remarks.

1.1 The UK perspective

Alison Noble opened by observing how much confusion still surrounds what the term "AI" means in a scientific context; when broken down it can refer to matters of reproducibility, how we access data, how we train the next generation of scientists, and how we ensure what we publish is high quality (as defined by a peer review bar).

The Royal Society published its report on Science in the Age of AI back in 2024, and has since continued to engage on multiple fronts, e.g. with UNESCO's open science work, international summits and bilateral dialogues like this one. She stressed that the role of human judgement in AI-generated science remains crucial. "AI in science" is also inherently global rather than confined to local silos, and a form of "team science" given the scale of infrastructure and data required these days.

1.2 The EU perspective

Maria Cristina Russo then laid out the new European AI Strategy for Science, which proposes to set up a "CERN for AI" via a set of physical institutes working at the intersection of AI and science. Her guiding principle was a commitment to trustworthy, human-centric AI.

The strategy is being delivered through "RAISE" (Resourcing AI for Science in Europe), organised around four building blocks (infrastructure, data, funding and talent) with the explicit aim of mobilising the private sector for public benefit across all of them.

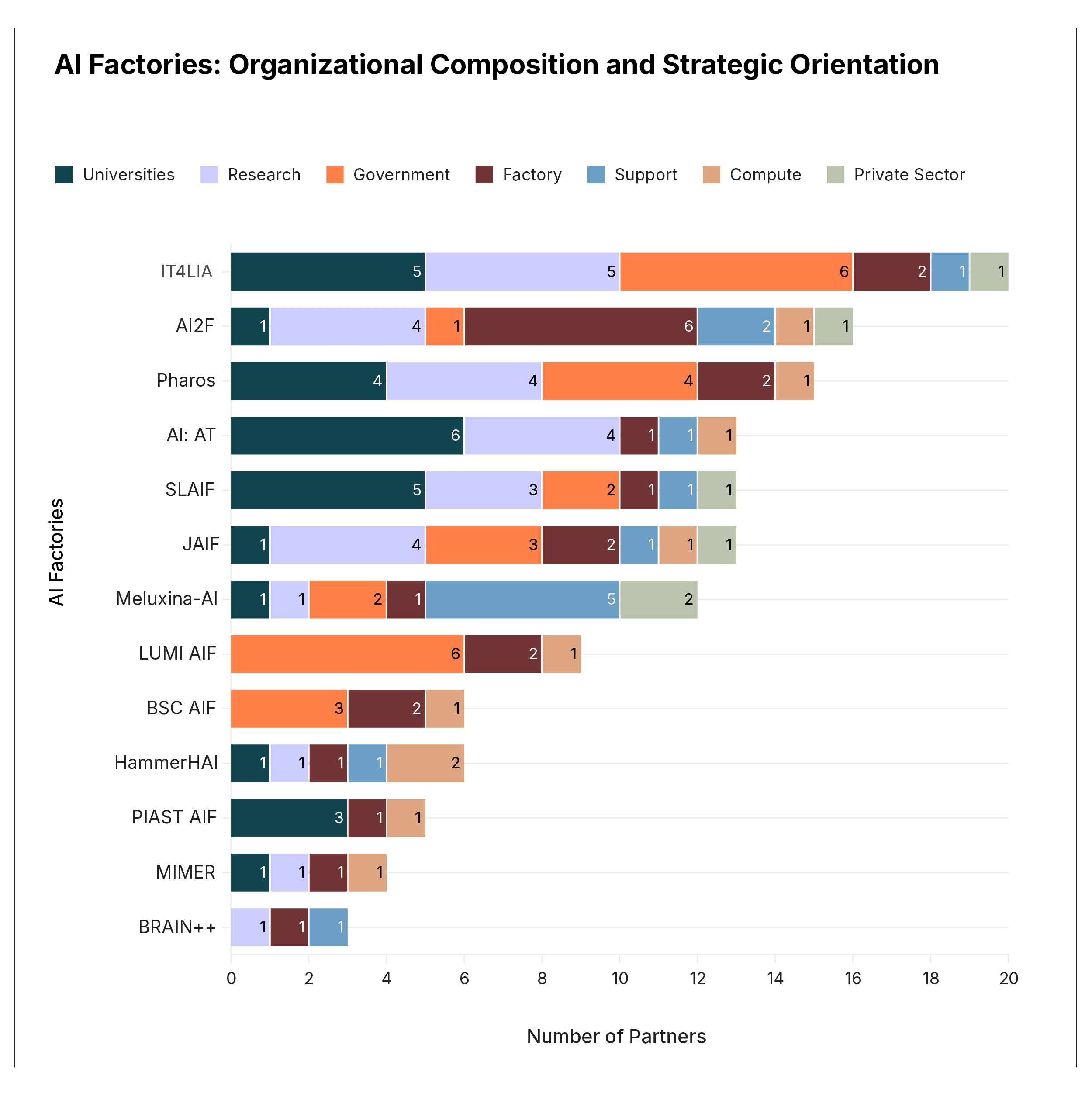

On the infra side, the strategy is tied to the new AI "gigafactories" across Europe (five of them, backed by a €20bn InvestAI facility), with €600M of Horizon funds allocated via pilot calls in the 2026-27 work programme so scientists (and not just industry) get good access to them. I learnt more about this from an Interface policy brief that was published last year.

Alongside the physical institutes, the goal is to grow talent networks across the EU. Next month (I think?) a new pledge mechanism will launch to mobilise philanthropic contributions to support AI in science. Maria closed by observing that there is no better time than now for the European continent to be geopolitically united around this agenda, given the exciting progress being made across so many scientific disciplines. But we have to do so responsibly, which is precisely why meetings like this one matter.

2 Discussion

Five structured questions were posed to the attendees, so my prepared responses to each follow, along with notes from the discussion. We never got around to the last few questions in a structured way, so read those notes as more of a stream of consciousness...

3 How do we ensure AI strengthens scientific excellence, integrity and collaboration across borders?

I opened with the bad news that AI-poisoned literature is a serious integrity threat to science. LLM-generated papers are already passing peer review, and evidence synthesis degrades badly through a recursive feedback loop if it is blindly trained on AI outputs.

The good news is that human experts can remain in the loop, but this needs the right scaffolding: data-exchange protocols that can be independently checked, rather than large centralised regulation. Conservation Evidence vetting, iNaturalist crowd-sourced verification, CITO citation typing, and DOI/ORCID/Zenodo provenance chains all work across borders today. We should be proactively boosting the "human-to-human verification" fabric, not just the human-to-AI and AI-to-human directions that currently get most of the attention.

My two asks towards this are:

- Primary observations must stay separable from AI-derived products, or long-term baseline trends become unrecoverable. We made this recommendation for biodiversity in our recent PNAS paper.

- Cross-border replication by different groups is also an integrity mechanism in itself. For example, we are publishing our TESSERA embeddings across multiple sites (Cambridge, the Swiss datacube, Indian mirrors and soon OpenGeoHub), which provides a cross-check that our embeddings haven't been silently tampered with or biased by any one group.
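A minimal sketch of how such a mirror cross-check could work, assuming every site serves byte-identical copies of the same tile: hash each copy and flag any mirror whose digest disagrees with the majority. The mirror names and tile bytes below are hypothetical placeholders, not TESSERA's actual distribution layout or verification tooling.

```python
import hashlib
from collections import Counter


def sha256_of(data: bytes) -> str:
    """Hex SHA-256 digest of one tile's bytes."""
    return hashlib.sha256(data).hexdigest()


def cross_check(copies: dict[str, bytes]) -> tuple[str, list[str]]:
    """Compare one tile as served by several independent mirrors.

    Returns the majority digest and the (sorted) list of mirrors whose
    copy disagrees with it. No single mirror is trusted on its own.
    """
    digests = {site: sha256_of(blob) for site, blob in copies.items()}
    majority, _count = Counter(digests.values()).most_common(1)[0]
    outliers = sorted(site for site, d in digests.items() if d != majority)
    return majority, outliers


if __name__ == "__main__":
    tile = b"embedding tile bytes"  # hypothetical stand-in for a real tile
    copies = {
        "mirror-a": tile,
        "mirror-b": tile,
        "mirror-c": tile + b"\x00",  # a silently altered copy
    }
    digest, suspicious = cross_check(copies)
    print(suspicious)  # ['mirror-c']
```

Majority voting only helps if the mirrors are run by genuinely independent groups; in practice one would also compare against a manifest of digests published by the original producer.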

The discussion picked up on the EU Sentinel/Copernicus programme as a good example of both strengths and weaknesses. There is enormous, long-lived data production, but it is difficult to track which data was used to generate what, which algorithms were applied and who verified each step.

The group agreed that one of Europe's strengths is that its large-scale scientific data programmes already have mature human-to-human collaboration structures in place, which is an excellent bootstrap for the verification fabric above.

The SCIANCE pilot[1] of RAISE is mapping out the research and science agenda from the ground up, with open calls for community participation. The current call (fundamental biology and environmental sciences) has just closed, and the pilot plans to run open workshops to capture expertise. RAISE is also explicitly about the combination of AI and science, not just AI applied to science.

Biology is one field that has shared data on publication since the 19th century, implemented at scale by places like the European Bioinformatics Institute, which has worked across borders with the NIH to shape how international databases are run. The hard transition now is towards clinical datasets, which are difficult to anonymise and secure. Europe has a lead here thanks to the sophistication and curation quality of its bioinformatics datasets built over many years.

4 Responsible AI as an enabler of trust and quality, rather than a brake on discovery?

Sunlight remains the best disinfectant when it comes to misapplication of AI in science. We must boost transparency and peer review capabilities, potentially using AI. The difficulty is that there's so much AI generation throughout the scientific pipeline now (from papers to source code to even the reviews) that it's difficult to know where to start!

One approach to help keep up with this is to add machine-readable AI provenance via voluntary disclosure protocols. We're already seeing this reach the policy space via Article 50 of the EU AI Act. Such disclosures would let good science move faster by letting reviewers triage their attention, rather than rejecting submissions wholesale on suspicion of being AI-generated. What's also clear is that useful AI models need dynamic, recent data and benchmarks. We should therefore back AI-driven and traditional methods together rather than forcing an either/or choice and starving traditional science out of the equation too hastily.
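To make the triage idea concrete, here is a minimal sketch assuming a hypothetical per-artefact disclosure record. The `AIDisclosure` fields are illustrative only and are not taken from Article 50 or any existing disclosure standard. Serialising the records to JSON is what makes them machine-readable, and the triage step surfaces only unverified AI-generated parts instead of blocking the whole submission.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AIDisclosure:
    """Hypothetical disclosure record for one part of a submission."""
    artefact: str                     # e.g. "manuscript", "analysis code"
    ai_generated: bool                # was AI used to generate this part?
    tools: list[str] = field(default_factory=list)
    human_verified: bool = False      # has a named author checked the output?


def to_json(disclosures: list[AIDisclosure]) -> str:
    """Serialise the disclosures so tooling (not just humans) can read them."""
    return json.dumps([asdict(d) for d in disclosures], indent=2)


def triage(disclosures: list[AIDisclosure]) -> list[str]:
    """Artefacts a reviewer should look at first: AI-generated and unverified."""
    return [d.artefact for d in disclosures
            if d.ai_generated and not d.human_verified]


if __name__ == "__main__":
    submission = [
        AIDisclosure("manuscript", ai_generated=True, tools=["llm"], human_verified=True),
        AIDisclosure("analysis code", ai_generated=True, tools=["code assistant"]),
        AIDisclosure("field data", ai_generated=False),
    ]
    print(triage(submission))  # ['analysis code']
    print(to_json(submission))
```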

We believe our TESSERA research is an example of responsible AI accelerating discovery at scale through openness. We've made the complete training and inference pipeline open and reproducible, and also generated global embeddings once and mirrored those openly too. This gives downstream scientists a huge productivity gain on tasks such as biodiversity assessments, without needing deep AI expertise. Accessibility doesn't necessarily mean being given a giant set of GPUs; it can mean being given a useful data product.

More culture change is needed in some disciplines, such as engineering, towards doing ethics upfront as we do in clinical science. This is especially true for AI outputs that don't have an underlying statistical model to reason about. This connects back to the excellent FT piece last week on RCTs and critical thinking.

We must also not treat AI systems as monolithic, as compositionality matters both across AI models and also between AI and non-AI (physics, maths, simulation) tooling.

4.1 Healthcare as a special/difficult case

It was generally agreed that healthcare is a special case, very distinct from the remote sensing example I brought up. It's difficult to move clinical data across borders, but federating the compute to the data instead is very expensive and makes working across models hard. Original research on cross-border clinical federation is needed. Next-generation workflows and hardware, including trusted computing, are probably prerequisites for this infrastructure.

Healthcare trust ultimately involves an element of human judgement, but engineering often doesn't, since the end-user context is what defines "responsible". We've historically underinvested in evaluating engineering outputs as they become more capable. The comparative assessment model (CASP for protein folding, "not a competition") was raised as an example worth emulating in other fields, so that we can have more benchmark data.

5 UK–EU lens: a shared interest in high-quality, interoperable, AI-ready scientific data

Psychology has had a well-known replication crisis, but AI has moved so fast that we've not had a chance to do any meaningful replication yet. The barriers are high, since replicating AI-driven results requires GPU access and training data at costs that are out of reach for most labs. The other problem is that academic careers are rarely built on replication, so learned societies may be the right venue to host longer-term replication programmes.

On a smaller scale, it's possible to pitch a replication study to a journal and let them decide if it's interesting. Big Tech investing in AI for science is welcome, but it brings a skewed perception of the true costs.

On a more practical note, the scale of trusted primary observations is a policy problem, since managing that storage requires a lot of work. TESSERA produces ~1 PB/year and growing, and sustaining that investment is not tractable without federated infrastructure. JOINER-style dark fibre between UK institutions, and equivalent EU/Swiss links, are important for shifting this data around Europe.
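A back-of-envelope sketch of why dedicated links matter at this scale, assuming decimal petabytes (1 PB = 10^15 bytes) and illustrative link speeds of 1, 10 and 100 Gbit/s; these are not JOINER's actual provisioned capacities. Keeping pace with ~1 PB/year of new data needs only a modest average rate, but replicating an archive that has accumulated over several years to a new mirror is where shared high-capacity fibre pays off.

```python
SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY


def transfer_days(petabytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Days to move `petabytes` over a `link_gbps` link.

    `efficiency` is the assumed fraction of line rate actually achieved
    (1.0 = ideal, ignoring protocol overhead and contention).
    """
    bits = petabytes * 1e15 * 8
    return bits / (link_gbps * 1e9 * efficiency) / SECONDS_PER_DAY


def sustained_gbps(petabytes_per_year: float) -> float:
    """Average rate in Gbit/s needed just to keep up with new data."""
    return petabytes_per_year * 1e15 * 8 / SECONDS_PER_YEAR / 1e9


if __name__ == "__main__":
    for gbps in (1, 10, 100):
        # roughly 92.6, 9.3 and 0.9 days per PB respectively
        print(f"{gbps:>3} Gbit/s: {transfer_days(1, gbps):6.1f} days per PB")
    # roughly 0.25 Gbit/s on average for 1 PB/year
    print(f"keep-up rate for 1 PB/year: {sustained_gbps(1):.2f} Gbit/s")
```

Real transfers rarely hit line rate, so lowering `efficiency` (say to 0.5) stretches these figures proportionally.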

AI poisoning is also a cross-border externality, since one contaminated training corpus in one jurisdiction poisons derivative science everywhere. Therefore any AI-ready scientific data must come with aligned provenance standards; this happens in clinical data already but not usually in less sensitive fields.

"Open to read" is not "open to train on", and authors of these data sources need to be able to give granular consent for model training while keeping publishing financially sustainable. This is a common problem in, for example, forest plot datasets, where the painstaking data gathering takes thousands of people many decades of observation. Just slurping that up into AI model training isn't good for anyone beyond very short-term gains.

6 How can funders and institutions best support the changes brought by AI in science?

- Treat AI-ready data layouts as first-class research outputs. The boring work of rewriting data into Zarr, STAC catalogues, multi-registry clients, provenance metadata, and so on is what makes computational science tractable. Funders should explicitly reward investment in responsible data infrastructure.

- Funding key producers once to let others mirror freely is also a good model. For example, TESSERA cost many GPU-years to generate but redistribution of the resulting map tiles is cheap by comparison. This is vastly more efficient than each country training its own foundation model from scratch.

- Recognise that free peer review is already broken by LLM polish. Decentralised reputation networks (Tangled/ATProto-style) are a plausible replacement, and funders should seed experiments. The NGI is a good example of this in the EU, but we need more!

- Honest environmental accounting of AI's compute and water footprint needs to be standardised across institutions so we can make cost/benefit tradeoffs.

7 Why UK–EU alignment on AI-in-science strategy matters

I was pretty surprised to find that Article 50 of the EU AI Act will likely be live from August 2026 and will set the disclosure baseline for textual content that UK ecosystems will have to interoperate with. A coalition of the willing across the UK and EU also has the weight to challenge publisher hegemony over AI-training rights; UNESCO is too weak on its own.

(I didn't manage to take further notes on the discussion of this point.)

8 Closing

The crux of the discussion wasn't really whether to regulate AI together, but how to build the federated scientific substrate that makes responsible AI possible at all. I am encouraged that, in my domain, TESSERA and the Evidence TAP are two practical examples of being federated by design and resistant to AI poisoning precisely because no single entity controls them. However, science obviously covers a vast amount of much more sensitive data (as in healthcare), where operationalising this is really difficult.

The encouraging takeaway from both chairs was that the UK and EU are already closely aligned on this agenda. They are pushing for trustworthy AI, investing in the scientific substrate (especially the EU), and setting up high-level advisory boards that will explicitly include UK-based as well as EU academics.

Thanks as always to the RS for inviting me along to this, and any mistakes in my very hasty notes above are entirely my fault!

[1] When will we stop cramming 'AI' into every single acronym? AI am thinking it's a bit clichéd now.