Over the last month, I've seen rumblings in various private chats about the capabilities of Anthropic's new frontier model, but I was genuinely shocked today at how fast things are moving. Anthropic's Mythos Preview (HN) has found a lot of new zero-days, but it's the effortless chaining of vulnerabilities that shook me. Mythos autonomously wrote a browser exploit that chains four separate vulnerabilities with a JIT heap spray to escape two separate sandboxes (the renderer and kernel). This is no mean feat, and the fact that this is autonomous means that finding these vulnerabilities is now essentially down to energy input.

The elephant in the room is that once a vulnerability is discovered, there are hundreds of millions of embedded devices all around our environment that cannot be upgraded easily, and will be running vulnerable binaries essentially forever. This was a problem before Mythos, of course, but the ease of chaining vulnerabilities now means that an autonomous agent can link seemingly small errors across physical devices and bypass most digital protections in modern-day living.

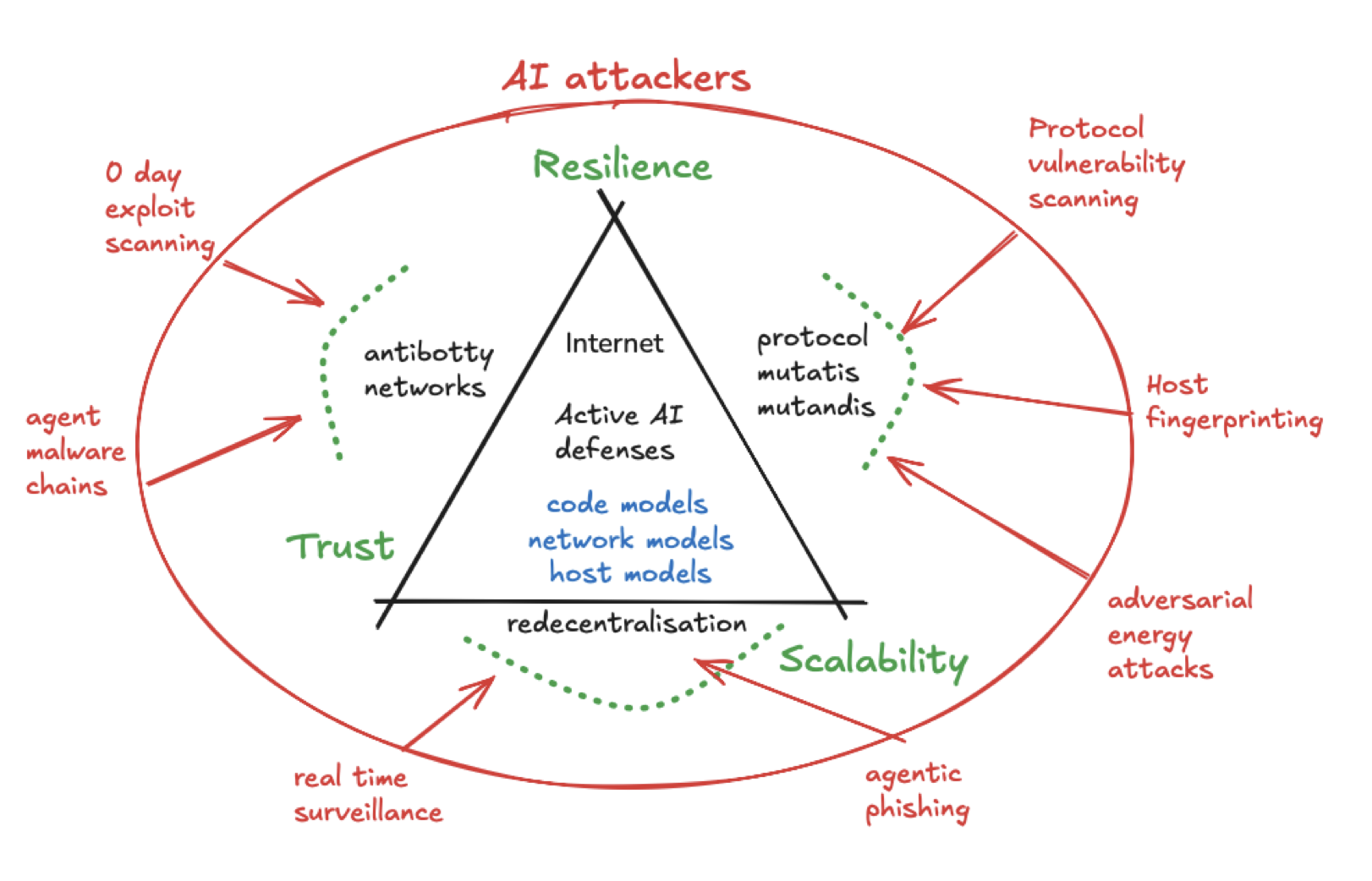

So are we totally screwed? Firstly, I applaud Project Glasswing as a good first step, but it's not enough. Computer science researchers also need to consider more radical options for the long-term sustainability of Internet connected devices. I wrote a speculative paper on Steps towards an Ecology for the Internet about this last year, but I never expected things to move quite this fast into building an implementation of those!

1 Antibotty inoculation defences

Firstly, we know that IoT devices are particularly vulnerable to getting hacked and exposing valuable private data/access. Given these devices are physically spread throughout society and often running outdated software, the only practical short-term defence I can see is to use frontier AI models to generate beneficial attacks to inoculate older devices via remote exploits. I dubbed these "antibotty networks" in our internet ecology paper last year as a nod to how antibodies in biological systems work very similarly to digital botnets.

The famous "How to 0wn the Internet in your spare time" 2002 paper explained how a worm could hit most hosts on the Internet in 60 seconds. Given how fast the bad guys are, the only way to outrace them is for us to have physically local defences that hear about a vulnerability and apply the exploit to nearby hosts in seconds. Microsoft proposed Vigilante back in 2008 to allow security alerts to outpace worms on a network.

We've had the technical capability for this sort of thing for years, and yet we centralise our defences into brittle and poisonous IT scanners rather than deploying more agile, local solutions that are tailored to the environment they run in. And remember that AI models are amazing at decompilation and working directly in binary formats, so they don't need the source code of your random router or pacemaker to attempt to inoculate it.
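To make the "hear about a vulnerability and act locally" idea a little more concrete, here is a minimal sketch of alert propagation between neighbouring hosts, loosely in the spirit of Vigilante's self-certifying alerts. Everything in it is an assumption for illustration: the `NEIGHBOURS` topology, the alert fields, and especially `verify`, which is a string comparison standing in for replaying a proof of the vulnerability in a sandbox. The point it shows is only that each host re-checks an alert before acting on it or forwarding it, so a forged alert goes nowhere.

```python
from collections import deque

# Hypothetical local topology: host -> neighbours it can reach directly.
NEIGHBOURS = {
    "router": ["camera", "thermostat"],
    "camera": ["router", "doorbell"],
    "thermostat": ["router"],
    "doorbell": ["camera"],
}


def verify(alert):
    """Placeholder for replaying the alert's proof in a local sandbox."""
    return alert.get("proof") == "reproduces-" + alert["vuln_id"]


def propagate(origin, alert):
    """Flood an alert hop by hop; return the hop count at which each host saw it."""
    if not verify(alert):
        return {}
    hops = {origin: 0}
    queue = deque([origin])
    while queue:
        host = queue.popleft()
        for peer in NEIGHBOURS[host]:
            # Each peer verifies the alert itself before acting or forwarding.
            if peer not in hops and verify(alert):
                hops[peer] = hops[host] + 1
                queue.append(peer)
    return hops


good = {"vuln_id": "demo-1", "proof": "reproduces-demo-1"}
forged = {"vuln_id": "demo-1", "proof": "trust-me"}
print(propagate("router", good))
print(propagate("router", forged))
```

In this toy network the genuine alert reaches every host within two hops, while the forged one is dropped at the origin. A real deployment would need an actual sandboxed check and a decision about what "acting" on a verified alert means for each device.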

2 Will formal specification and unikernels get us out of this?

KC Sivaramakrishnan conjectured to me this morning that the same capabilities that will make Mythos effective at finding vulnerabilities will make it possible to write and prove software correct. I'm not so sure. While AI will speed up proof specification dramatically, my instinct is that the possible envelope of vulnerabilities will always be ahead of what we can specify.

We will have a well specified core (let's say, memory safety and data race freedom), but a less well specified interface to some external service (let's say, timing side channels or powerline attacks against memory). A motivated AI attacker will chain attacks from the less proven pieces to find an information disclosure in the verified bit.

Are unikernels the way out of this by crunching our software layers together? Well, I obviously think they'll help because they can provide a way to eliminate leaky abstractions from runtime code. Even one of the big opponents to unikernels ended up using the same library OS techniques in the end:

[...] instead of merely relying on marginally better implementations of dated abstractions, we are eliminating the abstractions entirely. Rather than have one operating system that boots another that boots another, we have returned the operating system to its roots as the software that abstracts the hardware: we execute a single, holistic system from first instruction to running user-level application code [...] -- Bryan Cantrill, Oxide, 2022

And indeed, the great refactor was a credible proposal last summer to rewrite 100 million lines of code into Rust by 2030. The big benefit of unikernels+proofs here is that the well specified portion of the software you're running can really turtle up against attacks and update itself fast. However, these systems will always be vulnerable to a layer below or above being less well specified, and that layer acting as a springboard for attacks by a determined AI attacker.

I find it hard to believe that we will formally specify all layers of hardware and software and roll them out into production...ever. A Fire Upon the Deep's software archaeologists are a profession that's here today and not a scifi trope. So... back to the question of whether we're screwed when it comes to long-term security. Not just yet, I think!

3 Build in mutation diversity to our software stacks

The idea germinating in my head is that we could build self-mutating software systems. We typically have multiple levels of specification precision in real world software. Many researchers are thinking about the fully formally specified core: we can use AI to build the proofs and stare at trusted kernels really hard (say for cryptography or protocol state machines). A lot of people are already working on this, so I don't think it needs me to think about it much.

But then we get to the implementation defined stuff: once we prove something, it's usually then translated into something unproven (like Rust or OCaml) to execute. This is where my systems Spideysense tingles, since there are lots of ways to interpret a typical spec. So how about instead of taking the same choices here, we fuzz at build time and end up with millions of implementations that are all subtly different, but that still obey the same core specification? It might sound a bit mad, but it forces you to have a good test suite, and it also creates the opportunity to serendipitously discover some positive (say, performance-related) feature that is outside of your formal specification envelope.

Since I'm a researcher, I'm thinking about how to push this argument to the extreme. We could have slightly different ways of (say) expressing a state machine, or the ordering of a packet, or edge cases for parsers that make slightly different choices where choice is acceptable to the proven core. This diversity will drive up the costs of re-using attacks. Let's call this 'stochastic code generation' to make it sound fancier, but we already have plenty of heuristics for this sort of thing in, say, non-deterministic schedulers in GPUs. Why not drive it further up the stack using coding agents?
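As a toy illustration of what this could look like for a state machine, here is a minimal sketch in which a build seed picks the implementation-defined details (the integer encoding of each state and the order of the transition table) while every variant is checked against the same abstract specification. The three-state `SPEC`, the function names, and the choice to ignore unspecified events are all assumptions made up for this example. It is not a proposal for how a real coding agent would do it, just the shape of "many builds, one spec".

```python
import random

# Abstract specification: the transitions every variant must obey.
SPEC = {
    ("CLOSED", "open"): "SYN_SENT",
    ("SYN_SENT", "ack"): "ESTABLISHED",
    ("ESTABLISHED", "close"): "CLOSED",
}
STATES = sorted({s for s, _ in SPEC} | set(SPEC.values()))


def build_variant(seed):
    """Build one implementation whose internal layout depends on the seed."""
    rng = random.Random(seed)
    # Implementation-defined choice 1: the integer encoding of each state.
    codes = rng.sample(range(256), len(STATES))
    encode = dict(zip(STATES, codes))
    decode = {code: state for state, code in encode.items()}
    # Implementation-defined choice 2: the order of the transition table.
    rows = [(encode[s], ev, encode[t]) for (s, ev), t in SPEC.items()]
    rng.shuffle(rows)

    def step(code, event):
        for src, ev, dst in rows:
            if src == code and ev == event:
                return dst
        return code  # assumption: unspecified events leave the state unchanged

    return encode, decode, step


def conforms(variant):
    """Check a variant against every transition in the specification."""
    encode, decode, step = variant
    return all(decode[step(encode[s], ev)] == t for (s, ev), t in SPEC.items())


variants = [build_variant(seed) for seed in range(1000)]
assert all(conforms(v) for v in variants)
layouts = {tuple(sorted(v[0].items())) for v in variants}
print(len(layouts), "distinct state encodings, all passing the same spec")
```

An attack that hard-codes one build's internal state values would not transfer to a build with a different seed, which is the cost increase being argued for. The conformance check here is exhaustive only because the spec is tiny; anything realistic would lean on the test suite or on proofs about the core.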

4 Getting digital immune systems for our own lives

This is all inspired by biological systems where mutation happens all the time. It's remarkable how much discipline there is in the biological development of any organism as it grows, and yet room for diversity as well! Each digital host on a network could act as a T-cell scanning its neighbours for vulnerabilities before the global botnets reach them, and any replicated service mutates itself a bit every time it redeploys.

Anthropic's Project Glasswing is a restricted release to trusted security partners. This is the right call for them to make, but I'm a little grumpy I don't have access to help maintain my own open source code! So while I twiddle my thumbs, I encourage everyone to start brainstorming what biological diversity might look like as an ecosystem-wide defence across the whole Internet. I mentioned that I'll be speaking at a workshop on Rewilding the Web in Edinburgh on May 28th/29th about this, and this topic seems more relevant than ever!

4.1 Updates

Some entertaining followups to the post, like Justin Cormack noting:

I know someone who maintained a widely used piece of software with a vulnerability in older versions that was unpatched on millions of devices. They really wanted to patch it to fix the bug, and could have done, but their lawyer advised them that this would not be legal...

Issuing updates to other people's devices is such a gray area, especially when there's a moral argument with a known vulnerability...